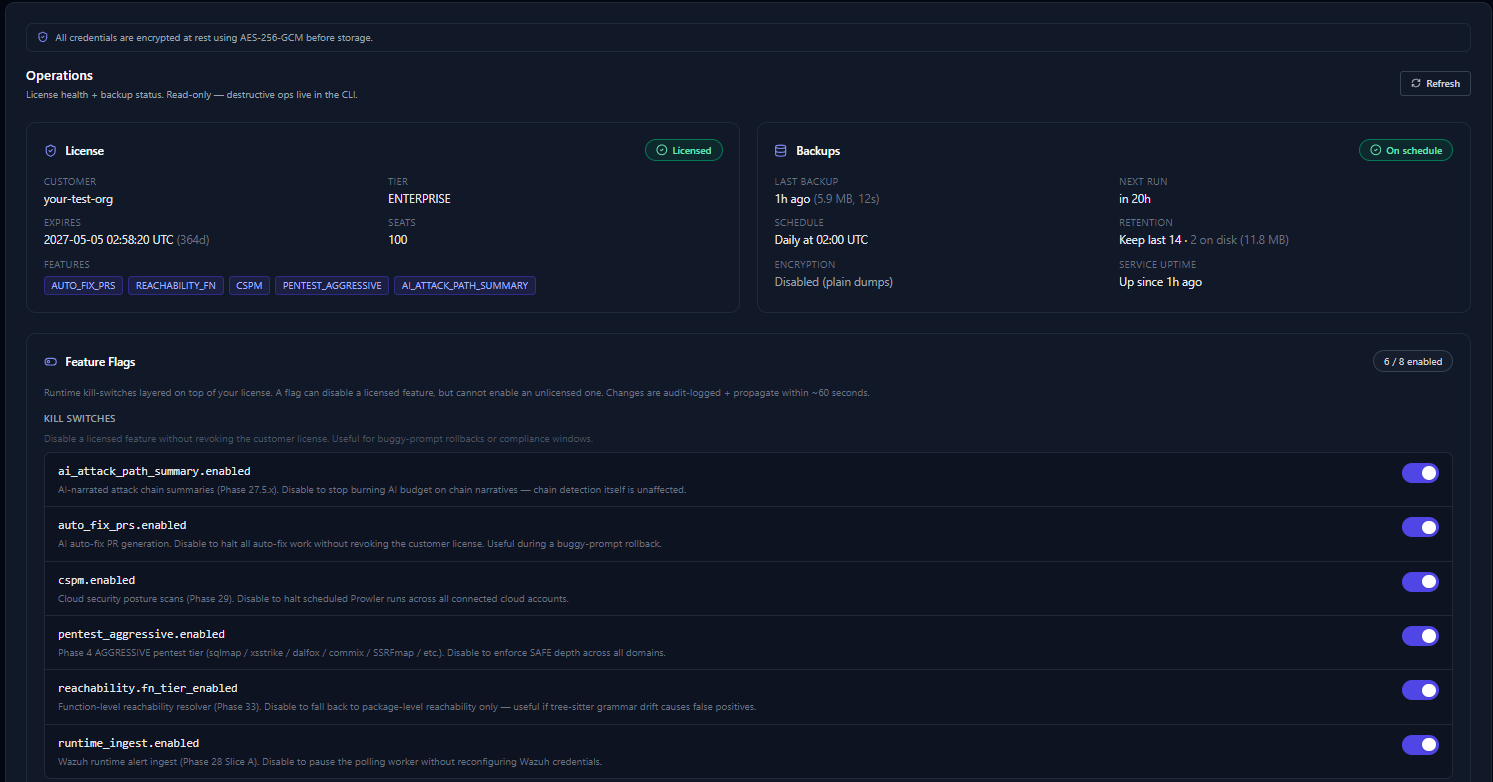

Multi-AI BYOA auto-fix

Pick your AI provider per org: Anthropic Claude, OpenAI GPT, Google Gemini, or local Ollama. The AI generates a unified-diff patch, opens a PR, and reports its verdict back via GitHub Check Runs.

- ✓ Operator-controlled AI choice — never locked to one vendor

- ✓ Patch validation: rejects off-target hunks (5-line slack)

- ✓ Per-finding cost transparency in the dashboard
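
To make the patch-validation bullet concrete, here is a minimal sketch of how an off-target check could work. It is not the product's actual implementation. It assumes each finding carries a target line range, and that "5-line slack" means a hunk must sit entirely within that range widened by 5 lines on each side. The names `Hunk`, `parse_hunks`, and `off_target_hunks` are hypothetical.

```python
import re
from dataclasses import dataclass

# Matches a unified-diff hunk header such as "@@ -40,7 +40,8 @@".
HUNK_HEADER = re.compile(r"^@@ -(\d+)(?:,(\d+))? \+(\d+)(?:,(\d+))? @@")

# Assumption: the "5-line slack" is a window around the finding's line range.
SLACK_LINES = 5


@dataclass
class Hunk:
    old_start: int  # first line of the hunk in the original file
    old_len: int    # number of original-file lines the hunk covers

    @property
    def old_end(self) -> int:
        # A zero-length hunk (pure insertion) is treated as touching its start line.
        return self.old_start + max(self.old_len, 1) - 1


def parse_hunks(patch: str) -> list[Hunk]:
    """Collect the original-file range of every hunk in a unified diff."""
    hunks = []
    for line in patch.splitlines():
        match = HUNK_HEADER.match(line)
        if match:
            # Per the unified-diff format, an omitted length means 1.
            old_len = int(match.group(2)) if match.group(2) is not None else 1
            hunks.append(Hunk(int(match.group(1)), old_len))
    return hunks


def off_target_hunks(patch: str, target_start: int, target_end: int,
                     slack: int = SLACK_LINES) -> list[Hunk]:
    """Return hunks that reach outside the target range plus the slack."""
    lowest, highest = target_start - slack, target_end + slack
    return [
        hunk for hunk in parse_hunks(patch)
        if hunk.old_start < lowest or hunk.old_end > highest
    ]
```

A validator built this way would reject the patch whenever `off_target_hunks` returns a non-empty list. Note that the context lines a diff tool adds around a change count toward the hunk's range, so a real check may need a wider window or may compare only the changed lines.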

vs the convention: Most auto-fix platforms lock you to one AI vendor. We let your operator pick, and switch, per org.